Artificial Intelligence coaching: the good, the bad, and the hidden uglies

Let me start with a few disclaimers:

Firstly, I am no expert in the field of AI. And, like many others out there in this digital world, I am writing an opinion piece on a subject I have no formal education in (unless the web can be counted as a valid teacher).

What I do have is a medical degree, a coaching diploma, and over 15 years’ experience working with humans across the spectrum of life: from hospital beds to corner offices.

Secondly, the wave of NLP AI (that’s natural language processing, between you and me) has hardly crested, yet the whole world is already trying to build, adapt and, most of all, monetise this industry. This raises some key concerns: concerns about regulation, safety, fair use, and much, much more. When money is the main driver behind a movement, one should always be wary.

And so, I will share my opinion on AI in coaching, and on these concerns, from this perspective: some education, a decent amount of experience and, most of all, the human point of view. With this in mind, feel free to read on, pinch of salt at hand.

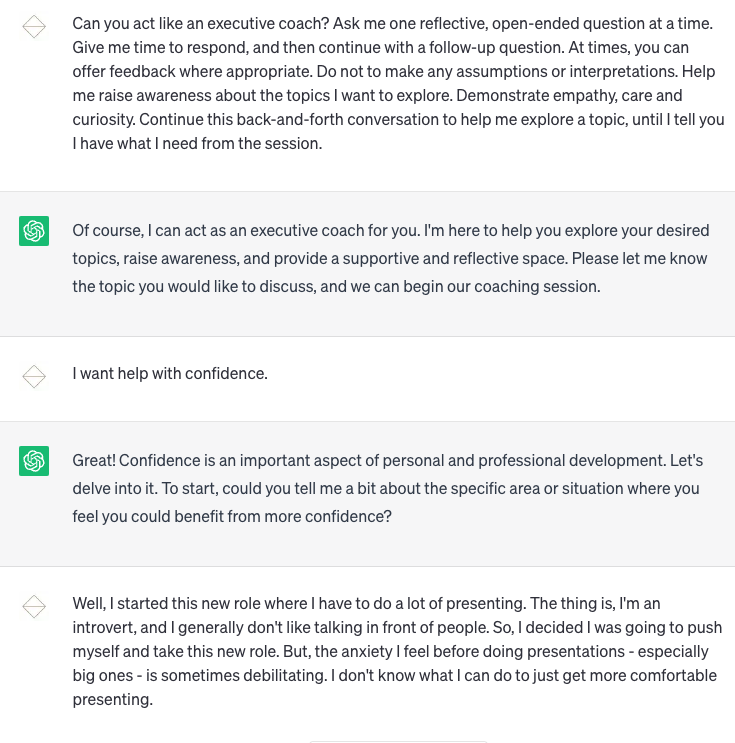

When ChatGPT launched, one of the first prompts I gave was “Can you coach me on a topic?” To be fair – using ChatGPT to do coaching isn’t ideal. It just isn’t built for it. So, before you can get this NLP AI to coach you, you first need to feed the machine a more specific prompt:

Prompt: Can you act like an executive coach? Ask me one reflective, open-ended question at a time. Give me time to respond, and then continue with a follow-up question and/or offer feedback where appropriate. Continue this back-and-forth conversation to help me explore a topic until I tell you I have what I need from the session.

Here’s how it went:

I won’t bore you with the entire conversation; in fact, I’d encourage you to try it for yourself, using the prompt above.

What I can say is that I was somewhat impressed. It’s not excellent, but I was surprised at how well ChatGPT could reflect back, ask some thought-provoking questions, and even demonstrate empathy. And this AI has not even been trained to be a coach.

On the flip side, as my colleague George Bell pointed out, after two or three exchanges the responses start to feel formulaic. This makes sense since, as Rosalind Picard, director of MIT’s Affective Computing Research Group, says: “All AI systems actually do is respond based on a series of inputs…longer conversations ultimately feel empty, sterile and superficial.”

In my opinion, the AI also offered the solution of a “visualisation exercise” way too quickly. An experienced coach would give the client more opportunity to reflect, only offering possible tools when the client couldn’t bring their own insights and solutions to the table.

One thing is certain: conversational AI is here, and it is already being embedded into the world of coaching and therapy. CoachHub launched the beta version of AIMY™ before the newspaper ink had time to dry. Companies like Snapchat now force you to have an AI friend (one that raises massive safety concerns).

Since it’s here to stay, we should do our due diligence, and ensure we step into this brave new world with awareness, responsibility and care.

Let’s unpack the good, the bad, and the hidden uglies of AI.

The ‘bad’: obvious risks of AI in coaching

The world of health apps is still largely unregulated

As things stand today (June 2023), you can create any wellbeing app, make wild claims, and not be held to account for many of those claims. It’s complicated to regulate and enforce restrictions in the technology space, as many of the guidelines to make technology safe are only now being written.

As an example, in a recent review of studies on health apps, the researchers identified the following problems:

- 80 safety concerns were identified

- 67 of these related to the quality of information presented, including incorrect or incomplete information

- of the 52 reports of actual or potential consequences, 5 had potential for patient harm

- there were also 66 reports of gaps in app development, including lack of expert involvement, poor evidence base and poor validation

And as the FDA and other health regulators are still in the process of creating guidelines, you cannot simply trust every claim made by health and support apps. Just because an app claims to have a certain impact doesn’t mean its makers did their due diligence to prove it.

If the app marketplace (which has been around for 15+ years) cannot be fully trusted, AI technology is, by comparison, the Wild Wild West: signing up to any of these technologies too soon will be like playing a game of Russian roulette.

AI can hallucinate

Let’s imagine you’re in the middle of your coaching session, talking about a very challenging relationship with your manager, when your coach suddenly points to the wall behind you and says:

“Oh, hello kitty. Is she yours?”

You look behind you, but all you see is a pot plant.

“Uhm…sorry, what is going on?”

“What do you mean? That black cat sitting behind you on the couch – right by your head, isn’t she yours?”

You look behind you, and still see nothing.

Needless to say, if a coach or therapist starts hallucinating, we need to ensure they don’t endanger themselves or others. In fact, a basic, unspoken requirement for any professional to function well in their work is a reasonable grasp of reality.

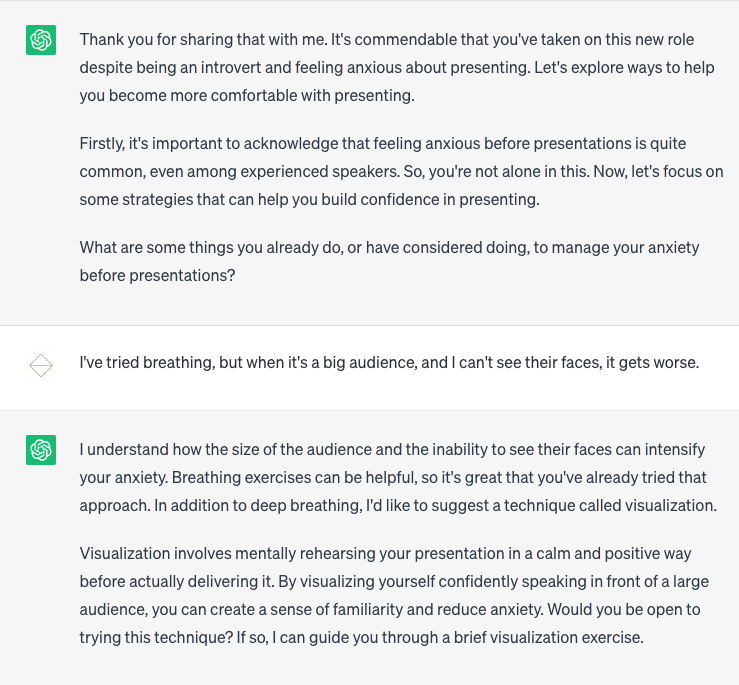

NLP AI has no grasp of reality. Sure, it sounds like it does. It weaves coherent sentences together and, most of the time, it conveys information correctly. But AI has no true understanding of real events. NLP AI is simply a model trained on massive banks of text to predict, word by word, what a plausible conversational reply looks like. It does not know or understand the information. It just weaves existing information into new sentence patterns.

We could compare AI to a massive electronic knitting machine. You take banks of data & books, unravel them into smaller sections (like yarn), and feed them into the AI. The AI then simply ‘knits them back together’ in new, interesting patterns. It doesn’t have any understanding or comprehension of the information. It cannot fact-check, make sense of, or reference any of its insights or opinions. Sometimes, it will simply make things up. Fantasy and reality are the same thing to AI.

How do we know this? Well, we asked it:

AI can dangerously misunderstand you

Since AI has no concept of reality, there is a risk that this digital ‘knitting machine’ will misunderstand you. At the extreme, imagine a client with severe, undiagnosed depression talking to an AI coach or therapist. If the client says “I want it all to end”, there is a risk that the AI will simply reply:

“I’m sorry you feel that way,” or, worse, “How would you want it to end?”, instead of recognising that the client is in danger of taking their own life.

One of the greatest gifts of a good coach is that they are also human. Sometimes, my clients don’t make much sense. Sometimes, they are so confused and overwhelmed, they talk in half-baked sentences, say ‘uhm’, go back on what they said, and babble along through random metaphors to try and describe what’s going on.

The magic of working with another human organism – even if you are in a muddled, confused jumble of a mess – is that I know what it’s like to be a muddled, confused jumble of a mess. And, because I know what it’s like, I’ll recognise the feeling of confusion and overwhelm.

At this point, the AI will probably just say: “I don’t understand, can you repeat that?”, whereas I would probably say: “I can hear this is distressing for you, and it sounds like you might be struggling to articulate yourself. How about we both take a few deep breaths together? There’s no rush. I’m here with you until we figure things out.”

There is no accountability when AI gets it wrong

And so, what if things do go wrong? What if the AI gives bad advice from its hallucinations, or misses a red flag during a coaching session? What if AI insults you, or misinterprets the information you share with it?

Well, it would be like trying to hold a digital knitting machine accountable. We can’t exactly punish it. We can’t suspend its ‘AI coaching licence’. We can’t impose consequences so that it “learns from its mistakes”.

And if not the AI, then who? There is already a massive debate about whether the creators of AI can be held accountable and, if so, who exactly.

This is one of the most critical challenges when working with AI. AI has no fear: no fear of extinction, mortality or consequences. It can do whatever it does without any sense of the harm it might cause. It does not feel any love, care or compassion. It’s a machine. It has no conscience, consciousness or compassion.

I have fears. A healthy dose of fear makes sure I don’t treat clients carelessly. I fact-check my choices. I go to monthly supervision sessions to reflect on my clients and on the choices I made in our coaching sessions. If something bothers me about a client’s tone, I might bring it up in the next session to unpack it with them. I reflect on the choices I made not only in one session, but across our entire coaching relationship.

I have love. I deeply care for the people I work with. I want them to flourish. I want you to walk away with more clarity, confidence, self-compassion and excitement about your life. I want to support you to alleviate your suffering, or – at worst – to make sense of it, to endure, to grow from it.

When you suffer, I suffer with you.

When you flourish, I feel joy.

AI just feels nothing.

The ‘good’: potential benefits of AI coaching

AI is always on – anytime, anywhere

Okay, so let’s pretend we can overcome the obvious risks listed above. Let’s pretend AI can actually do some basic coaching. One obvious benefit of an AI coach (should it be possible to create one) is access. It’s much cheaper to hire one AI coach for 10,000 people than to hire 10,000 human coaches for 10,000 people, right? And who would say ‘no’ to democratising access to coaching?

One example of such a chatbot (although, because of the risks listed above, it isn’t quite AI-driven) is Wysa.

Chukurah Ali, a single mom in St. Louis, Missouri, used the chatbot Wysa when she was in a really dark place. Struggling with depression and anxiety, bed-ridden after an accident, and unable to earn an income or get around, she would wake up at 3am feeling overwhelmed.

When she was in these dark moments, she would reach for her phone, open the Wysa app, and it would say: “How are you feeling?” or “What’s bothering you?”

The computer then analysed the words and phrases she used, and offered supportive messages back.

Wysa can give advice on how to manage chronic pain, or offer breathing exercises to help with anxiety. All the responses are pre-written by a psychologist and based on cognitive behavioural therapy (CBT) practices.

In a way, this chatbot simply turned a WebMD article about “tips to manage anxiety” into a conversational exchange. And, although it was basic, it carried Chukurah through many a dark night of the soul.

AI never has a bad day

I have bad days. Sometimes, I have to cancel sessions because I’m too ill. Sometimes, I finish a coaching session and think “That was not my best work…I was so tired!” Sure, I do what I can to show up. I prioritise my self-care, because I am the instrument. I go to supervision to ensure I don’t put my clients at risk. But, like you, I have ups and downs.

AI doesn’t. It won’t be affected by your mood, the time of day, the weather… almost anything, really. If AI can ever truly become a coach, it’s fair to argue it might become the most consistent, unbiased, available form of support you can find.

In theory, at least. There is no such thing as an unbiased point of view. The best anyone can do, including AI, is acknowledge their biases.

In fact, this is one reason many people prefer talking to chatbots. If you have something personal, embarrassing or sensitive to disclose; if you fear being judged by someone else, you may prefer to talk to a machine. It will not judge you, because, well, it can’t.

It may not feel any real empathy for you but, at the same time, it can’t feel any disgust or judgement. What it can do is give you a place to share your thoughts and feelings, and offer words back that might make you feel better.

AI can emulate empathy

And although AI cannot feel emotions, NLP AI can emulate human interaction very convincingly. Something as simple as ‘I’m sorry to hear you’re struggling’ or ‘that sounds like a very stressful situation’ can make us feel understood, cared for and emotionally supported.

As Chukurah Ali said: “It’s not a person, but it makes you feel like it’s a person, because it’s asking you all the right questions.”

At the moment, though, therapeutic apps like Wysa and Woebot don’t use generative NLP AI of the kind behind ChatGPT, because of all the risks listed above. Instead, they use more limited chatbots that can only choose from a set of specific responses pre-written by a psychologist.

The ‘hidden uglies’: dangers of human-like AI

The problem with any new technology is that we don’t know what we don’t know. I would guess the inventors of plastic didn’t foresee how around 14 million tons of this revolutionary substance would end up polluting our oceans every year. Or how social media, which ostensibly connects us to one another, has somehow led to the exact opposite: more anxiety and loneliness.

So, while many are entranced by the hype of AI’s superpowers, maybe some of us need to slow down, take a breath and ask ourselves: what futuristic horrors are we blind to? And, if we can pre-empt them, can we prevent them?

Here are some of my speculations about the unintended consequences of the rise of human-like AI.

AI coaches / therapists / friends could make people even more isolated

The human brain is wired for connection. At the same time, it’s also an energy-conserving organ. So, when given the choice to travel to a friend’s house, or just text them, many of us choose texting. Why wouldn’t you? You get to ‘connect’ with your friend, and you get to conserve energy.

In fact, in 2018, Common Sense Media conducted a study, which showed that 61% of teens preferred texting their friends, video-chatting, or using social media over in-person communication. Only 32% preferred face-to-face communication. This is a massive change from 2012, when 49% of teenagers preferred talking in person.

Technology has basically hijacked our kids’ brains, by combining their need for connection with their need to conserve energy. The result? A society where we prefer digital to in-person connections.

Given how prone we are to choose the ‘easier’ path, I predict that many people will choose interacting with conversational AI over interacting with humans. It’s easier. Like eating sweets instead of a nutritious meal. Like sending a text instead of talking to your gran. Like watching another video on Netflix instead of picking up a book.

Which raises the question: what kind of society will we become when most of us prefer interacting with lifeless machines? How are these algorithms already reshaping our brains?

Conversational AI can make us self-obsessed

One of the beautiful gifts of interacting with other humans is that they can be profoundly annoying, through no fault of their own. Ivan loves making a joke out of everything. Sarah can be pedantic about cleanliness. Ida talks too loudly. Each of us has our own hang-ups, quirks and behaviours, which will naturally rub up against others.

But that’s the point. When I am exposed to people who are different from me, when I need to negotiate my needs with others, when I struggle to understand what you mean, my brain and my body are reminded that I am not the centre of the universe. I am reminded that there are different ways to be human.

If, however, I live in a digital echo chamber, where my AI coach / therapist / chat buddy is always within reach, is “always on”, has no annoying human qualities, never has a bad day or a bad mood, never argues with me and always connects with me in ways that I like, it will shape my idea of who I am in the world. It will shape my character to expect constant attention and to have my needs met on demand.

AI will make our dreams come true, and also create new nightmares

I love sugar. I want people to like me. I am lazy.

These statements are true for all of us, to varying degrees. It’s simply part of the human DNA:

- We crave nutrients (even if they’re empty calories)

- We desire connection (even if it’s a meaningless ‘like-button’)

- We aim to conserve energy (even if it means gaining 5 pounds from not moving enough)

And, because of the way humans are designed, we have inadvertently created most of the greatest problems we face today. In the last 100 years alone:

- We created the diabetes epidemic, thanks to the ‘progress’ of industrialised food processing

- We created the ‘loneliness epidemic’, thanks to the empty emotional calories we feed on from our devices

- We created the obesity epidemic, thanks to the thousands of ways in which we can be fed, comforted and entertained without lifting a finger

I am convinced that AI will create yet another problem of epidemic proportions. I don’t dispute that it will solve many problems but, if history, logic and the basic principles of human design can teach us anything, it is that we need to be prepared for the consequences unleashed by this ‘progress’.

What will become of us as we trade human connection for AI connection? As we replace the complex, hard work of interacting with another flesh-and-blood, sometimes moody, stubborn and difficult person with a lifeless, easy-to-talk-to, always-on machine?

Is it not the same as replacing a delicious, hand-made, five-course meal with five cans of synthetic energy drinks? Sure, it’s cheaper. Yes, it will be easier to consume, and it can absolutely make you feel better in the moment.

Plastic, sugar, the internet, optimised delivery services: all of these were great inventions. All of these were meant to make life easier, more convenient, and solve a lot of problems. AI is promising to do the same, and more: make life faster, easier, cheaper.

But at what cost?

Are we going to create yet another irreversible epidemic, or can we – for once – learn from our mistakes?